The Age of Agentic Alignment: Why Leaders Must Manage Humans and AI Agents

By Jyothsna Santosh

AI & Data Science Leader | Human-Centered Innovation | Banking, Retail & Healthcare | Shaping Scalable, Trusted Intelligence Systems

October 8, 2025

Introduction — The Shift in Leadership

Leadership has always been about alignment. The task of leaders is to bring people together around a common vision and strategy. But today, alignment requires something new.

AI agents are increasingly shaping decisions in pricing, fraud detection, personalization, and operations. These systems don’t bring intent, values, or judgment. What they do bring is speed and optimization power that can transform entire industries.

That’s why strategic leadership is evolving. It’s no longer enough to align people. Leaders must now align people and AI agents within a single system of strategy, oversight, and accountability.

Why Alignment Matters More Than Ever

When people misalign, the results are familiar: teams pull in different directions, metrics are gamed, and strategy drifts. Leaders know how costly this can be.

AI agents introduce a parallel risk. They don’t misalign out of malice; they misalign because they optimize narrowly for whatever objective they are given. Left unchecked, they can maximize clicks while undermining trust, cut losses while introducing bias, or drive local gains at the expense of the bigger picture.

In both cases — people and agents — the effect is the same: strategy is compromised. Leadership must prevent this drift by embedding alignment into the system itself.

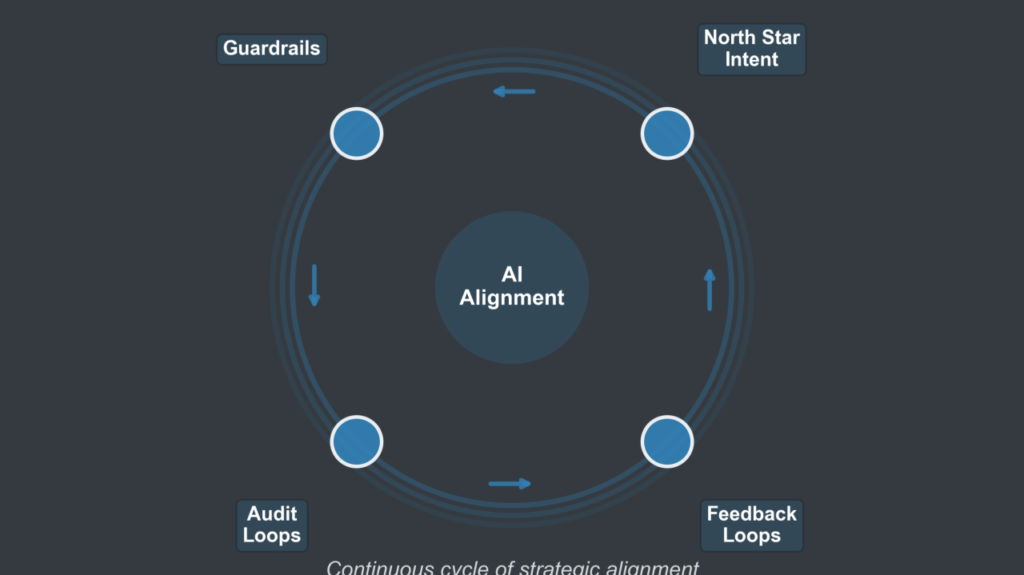

The Alignment Flywheel for AI Agents

To make alignment actionable, leaders need more than reports and dashboards. They need a repeatable cycle that keeps strategy and execution in sync. I call this the Alignment Flywheel for AI Agents:

1. North Star Intent

Leaders must articulate outcomes that reflect business goals and human values. It’s not enough to say “maximize revenue.” A stronger intent might be: maximize lifetime value while protecting customer trust.

2. Guardrails

Clear boundaries keep AI agents operating responsibly. Compliance, ethics, fairness, and brand constraints define where optimization must stop, protecting against unintended consequences.

3. Audit Loops

Transparency is essential. Regular reviews, dashboards, explainability checks, and independent audits help catch problems early and make learning visible to everyone.

4. Feedback

Alignment is not static. Feedback closes the loop — humans refine strategy while agents adjust behavior. This keeps the system evolving rather than locking into harmful patterns.

Together, these four steps create a flywheel: Intent → Guardrails → Audit → Feedback → Intent. Each turn reinforces alignment.
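To make the cycle concrete, here is a minimal Python sketch of one way the four steps might map onto an automated decision loop. It assumes a hypothetical pricing agent choosing among candidate offers. The `Proposal` and `Flywheel` classes, the trust-weighted scoring rule, the guardrail thresholds, and the 2% complaint-rate target are all illustrative assumptions, not a prescribed implementation.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Hypothetical example: a pricing agent chooses among candidate offers.
# All names, weights, and thresholds are illustrative assumptions.


@dataclass
class Proposal:
    name: str
    discount: float      # fraction of list price, e.g. 0.15 = 15% off
    expected_ltv: float  # assumed lifetime-value estimate for the offer
    trust_risk: float    # assumed 0..1 estimate of harm to customer trust


@dataclass
class Flywheel:
    trust_weight: float = 300.0   # Intent: cost of one unit of trust risk, in LTV terms
    max_discount: float = 0.30    # Guardrail: assumed brand/compliance limit
    max_trust_risk: float = 0.50  # Guardrail: hard stop on risky actions
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def intent_score(self, p: Proposal) -> float:
        # 1. North Star intent: maximize lifetime value while protecting trust.
        return p.expected_ltv - self.trust_weight * p.trust_risk

    def within_guardrails(self, p: Proposal) -> bool:
        # 2. Guardrails: hard filters that optimization cannot trade away.
        return p.discount <= self.max_discount and p.trust_risk <= self.max_trust_risk

    def decide(self, proposals: List[Proposal]) -> Optional[Proposal]:
        allowed = []
        for p in proposals:
            if self.within_guardrails(p):
                allowed.append(p)
            else:
                # 3. Audit loop: blocked actions are recorded, not silently dropped.
                self.audit_log.append(("blocked", p.name))
        if not allowed:
            return None
        choice = max(allowed, key=self.intent_score)
        self.audit_log.append(("chosen", choice.name))
        return choice

    def feedback(self, complaint_rate: float, target: float = 0.02) -> None:
        # 4. Feedback: when audited outcomes drift from the target, the intent
        # is re-weighted toward trust (in practice this is a human decision).
        if complaint_rate > target:
            self.trust_weight *= 1.5


if __name__ == "__main__":
    offers = [
        Proposal("deep_discount", discount=0.40, expected_ltv=900.0, trust_risk=0.10),
        Proposal("aggressive_upsell", discount=0.10, expected_ltv=850.0, trust_risk=0.40),
        Proposal("loyalty_offer", discount=0.15, expected_ltv=700.0, trust_risk=0.05),
    ]
    wheel = Flywheel()
    print(wheel.decide(offers).name)     # aggressive_upsell
    wheel.feedback(complaint_rate=0.05)  # complaints exceed the assumed 2% target
    print(wheel.decide(offers).name)     # loyalty_offer
    print(wheel.audit_log)
```

In this sketch the guardrails act as hard filters rather than score penalties, so a high-value offer cannot buy its way past a boundary. The feedback step changes only the intent weight, so one turn of the flywheel shifts the agent from the aggressive upsell to the loyalty offer without anyone rewriting the agent itself.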

Applying the Flywheel in Different Industries

While the flywheel applies universally, the emphasis shifts depending on the context:

- Finance: Guardrails are defined by regulation and systemic risk. Alignment protects stability.

- Healthcare: Ethics and compliance dominate, ensuring safety, fairness, and trust.

- Retail: Speed and customer experience take center stage, but guardrails prevent erosion of trust.

- Capital Markets / Payments: Alignment ensures fraud routing, risk scoring, and personalization stay within regulatory and brand boundaries.

Leadership is always contextual. The flywheel provides the structure, but leaders must tune it to their industry’s unique risks and mission.

The Leadership Imperative

The point is not to equate AI agents with people. People bring context, values, and judgment; agents don’t.

The point is that leaders must design conditions where humans own the strategy, and agents extend it responsibly. That means embedding clear intent, strong guardrails, continuous audits, and responsive feedback into daily practice.

Leadership in the age of AI is not only about setting vision. It’s about system design — making sure that people and agents reinforce one another instead of drifting apart.

Conclusion — The Real Test of Alignment

The true test of leadership today is not whether an organization uses AI. It’s whether that AI is aligned.

The organizations that thrive will be the ones that turn alignment into a leadership ritual — where intent, guardrails, audits, and feedback form a continuous cycle.

Because if agents aren’t aligned, neither is the strategy.

And in that alignment, leadership finds its most human form.

About the Author

Jyothsna Santosh is an AI and Data Science leader who builds systems where intelligence scales — and leadership stays profoundly human.
